Achieving Success in Data Migration

Repeatability, predictability and timeliness are the three technical factors that underpin a controlled go-live. BIDM keeps the business aligned while DM REVOLVE and WOM keep delivery visible.

Overview

Repeatable, predictable and timely delivery

A successful data migration hinges on excellent communication with clients, which is the core of our BIDM methodology. In addition, we emphasise three technical factors that contribute to success in any migration project: repeatability, predictability and timeliness.

These principles help the team prepare properly, rehearse the migration, and complete the go-live with confidence.

The three factors

Repeatability

Automate the steps and repeat them end-to-end as often as needed.

Predictability

Know the likely result before the final cutover and go-live.

Timeliness

Follow a realistic, fully scheduled runsheet for the migration window.

Method

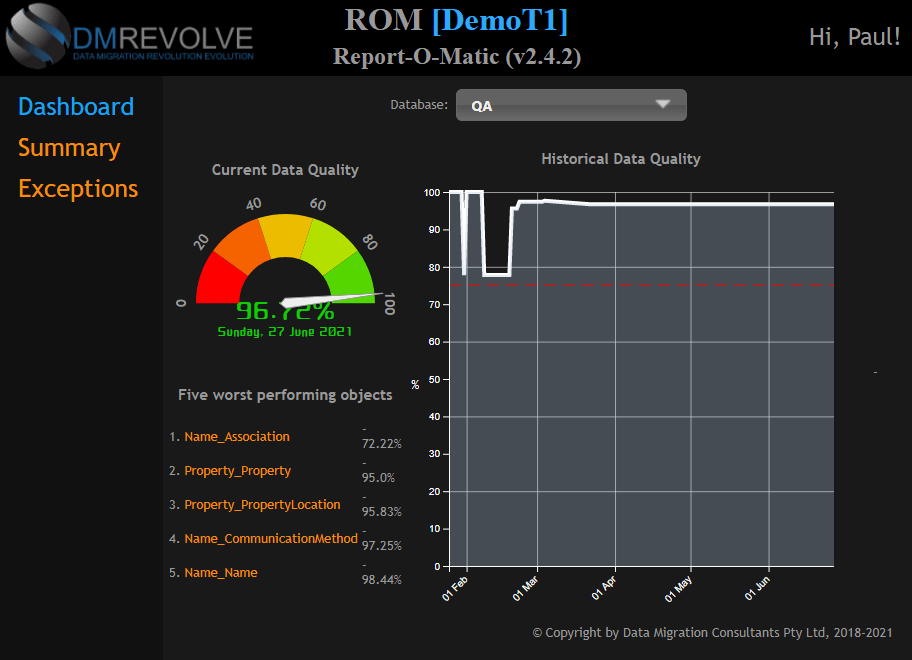

DM REVOLVE, plus our WOM scheduling and verification system, helps keep each stage visible and controlled from start to finish.

01 / Repeatability

Repeatable automation reduces delivery risk

Our proprietary data manipulation tools and automation techniques ensure that the data migration process is both repeatable and reliable. By consistently applying the same controls, we can run the data migration process end-to-end as often as needed and get dependable results every time.

- Automated source and target system refreshes

- Data extracts

- Script execution

- Data quality checking, tagging and loading

- Auditing and reporting

For critical systems with large data volumes, repeatable automated processes are essential.

Repeatable processes mitigate risk

02 / Predictability

Predictability starts before go-live

To guarantee the result of the final data migration, the main cut-over and system go-live deployment, the expected result should be known beforehand. That is difficult in practice because data and project conditions change continuously.

- The ever-changing nature of the data

- Unknown quality errors introduced before the final migration

- Target system changes

- Migration requirement changes

- Changes in project resources

Our methodology focuses on delivering a predictable outcome for businesses during the final migration and go-live event. Repeatability helps us encounter and address likely scenarios before go-live.

We work towards 100% predictability

03 / Timeliness

Timeliness is governed by the runsheet

In addition to repeatability and predictability, the timeframe of the go-live migration is critical to success. A thorough and accurate runsheet is vital, outlining the order of tasks and the time taken for each step.

- The order of all tasks to be completed

- The times taken for each task

All aspects of a data migration should be known before a go-live event begins. Without an accurate and comprehensive scheduled runsheet, the outcome of a project go-live cannot be predicted in advance.

A realistic go-live timescale that can be followed and achieved is essential

Explore the DM REVOLVE user interface

Browse the interface and toolset used to keep migrations visible, controlled and repeatable.

Next step

Bring repeatability, predictability and timeliness into one workflow

If you are planning a migration and want a delivery model that keeps the business aligned while controlling the technical process, the next step is a conversation with DataMC.